A Neural Network Allows The Robot To Imagine What A Person Thinks

A research team led by Professor Zeng Yi at the Institute of Automation of the Chinese Academy of Sciences has developed a neural network that applies theory of mind to multi-agent systems.

The system models how many agents interact with one another, including how they compete and cooperate. This matters for building systems in which many people and robots work together.

Theory of Mind (TM) is a person’s ability to infer other people’s mental states, such as beliefs, intentions, and desires. It is one of the highest-level social cognitive abilities.

In recent years, the neural mechanisms underlying theory of mind have gradually been uncovered. Understanding these mechanisms makes it possible to study social interaction both within multi-agent systems and between humans and computers.

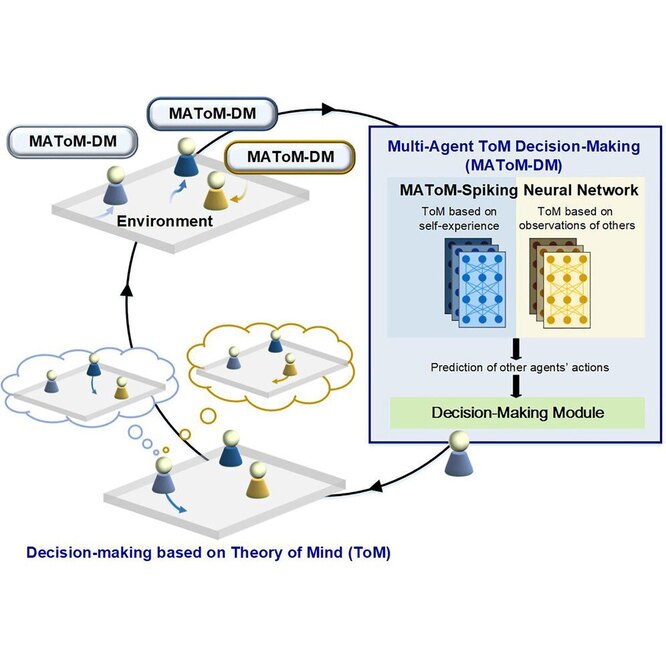

The network developed by Zeng Yi’s team is called MAToM-SNN.

When an AI system gains a theory of mind, its agents can model the states of other agents, not just their own. Such systems work more like a human community and are more reliable and safer for humans.

The proposed neural network consists of two modules. The Self-MAToM module predicts the behavior of other agents (humans or robots) from the agent’s own experience. The Other-MAToM module predicts their behavior from historical observations of those agents.

“The predicted behavior of other agents using MAToM-SNN provides a more complete state representation for the decision model. This allows the decision network to adjust its policies,” says Professor Zeng Yi, co-author of the study. “Agents with MAToM-SNNs can use their experience of observing other agents to infer their future behavior and adjust their policies for better interaction.”
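The idea of two predictors feeding a richer state to the decision model can be illustrated with a toy sketch. This is not the authors' spiking network: the action set, the rule-based `own_policy`, the majority-vote history predictor, the `min_history` fallback threshold, and the one-hot encoding are all assumptions made for illustration only.

```python
from collections import Counter

# Hypothetical discrete action set, chosen only for illustration.
ACTIONS = ("left", "right", "stay")


def own_policy(observation):
    """Toy stand-in for the agent's own decision rule: move toward x = 0."""
    x = observation[0]
    if x > 0:
        return "left"
    if x < 0:
        return "right"
    return "stay"


def self_based_prediction(other_observation):
    """Self-MAToM idea: 'what would I do in the other agent's situation?'"""
    return own_policy(other_observation)


def history_based_prediction(history):
    """Other-MAToM idea: extrapolate from the other agent's observed actions."""
    return Counter(history).most_common(1)[0][0]


def predict_other(other_observation, history, min_history=3):
    """Use observations when there are enough; otherwise fall back on own experience.

    The threshold is an assumption of this sketch, not part of the published model.
    """
    if len(history) >= min_history:
        return history_based_prediction(history)
    return self_based_prediction(other_observation)


def augmented_state(own_observation, predicted_actions):
    """Append a one-hot encoding of each predicted action to the own observation."""
    state = list(own_observation)
    for action in predicted_actions:
        state.extend(1.0 if action == a else 0.0 for a in ACTIONS)
    return state


# No history yet: the prediction comes from the agent's own policy.
early = predict_other((2.0,), history=[])
# Enough history: the prediction follows what the other agent has actually done.
later = predict_other((2.0,), history=["stay", "stay", "right"])
# The decision model would receive this enlarged state instead of the raw observation.
state = augmented_state((0.5,), [later])
```

In the real system both predictors are learned networks rather than hand-written rules. The sketch only shows the data flow: predictions about other agents are appended to the agent's own observation before a decision is made.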

MAToM-SNN improves the performance of multi-agent systems in cooperative and competitive tasks. “MAToM-SNN demonstrates a high level of generalization in multi-agent reinforcement learning tasks based on recurrent neural networks,” says Zhao Zhuoya, co-author of the study.

The researchers found that Self-MAToM helps Other-MAToM learn. A sense of self is a prerequisite for understanding others: when information about other agents is incomplete, an agent draws conclusions about them from its own experience, the authors note.

